What is query fan-out?

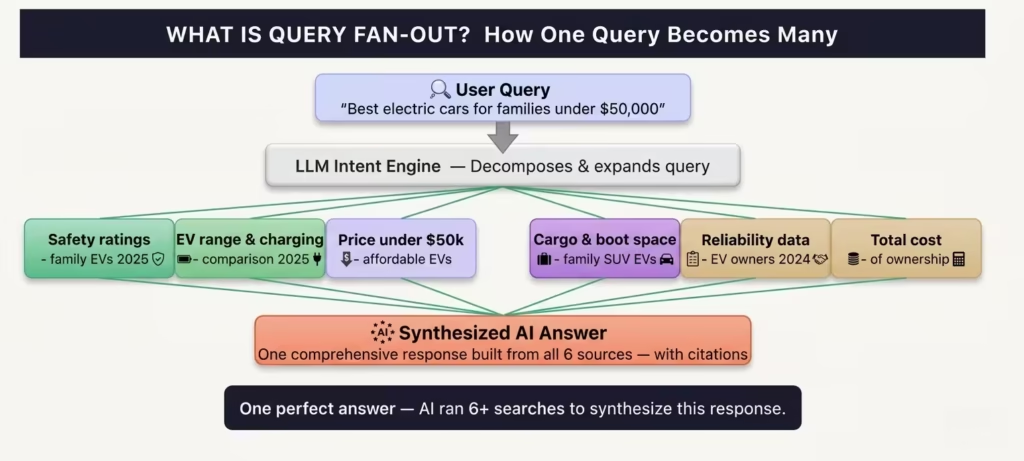

Query fan-out is an information retrieval technique where AI search systems decompose a single user query into multiple related sub-queries, retrieve content for each independently, and synthesize the results into one comprehensive AI-generated response.

In traditional search, Google ranks individual pages against your query and tries to put the single best-matching page at the top. In AI search, the system assumes that no single page can fully satisfy a complex question, so it fans out across several related searches simultaneously before composing the answer.

Google’s Head of Search, Elizabeth Reid, confirmed this at Google I/O 2025, describing Google AI Mode’s query fan-out technique as a mechanism that breaks questions into subtopics and issues a multitude of queries simultaneously on the user’s behalf.

Real-world example. User query: “Best electric cars for families under $50,000”

LLM sub-queries generated: (1) safest electric SUVs for families 2025, (2) electric car range comparison for families, (3) EVs priced under $50,000, (4) electric cars with most cargo space, (5) EV reliability rankings 2024, (6) total cost of ownership electric vehicle, (7) EV charging infrastructure home, (8) family electric car tax credits 2025

How LLMs expand one query into 6 to 8 sub-queries

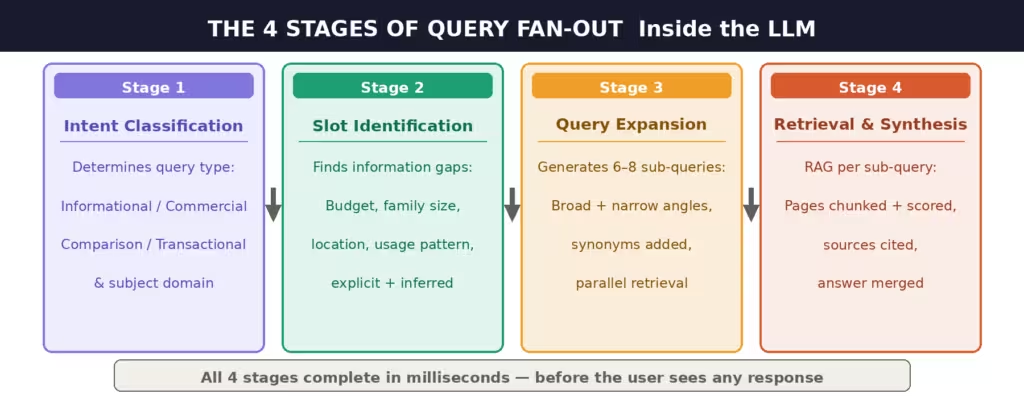

The query fan-out process is not random. It can be understood as a structured four-stage workflow that runs whenever a complex prompt arrives. Understanding these stages is the key to knowing what content you need to create.

| Stage | What happens |
| --- | --- |
| Stage 1: Intent classification | The LLM determines the query type (informational, commercial, comparison, transactional) and the subject domain (tech, health, finance, etc.). This dictates which retrieval strategy to apply. |
| Stage 2: Slot identification | The LLM identifies the information ‘slots’ it needs to fill. For a query like ‘best family EV under $50k’, slots include budget range, family size needs, safety requirements, charging infrastructure, and ownership costs. Some are explicit in the query, some are inferred. |
| Stage 3: Query expansion & diversification | The system generates 6 to 8 distinct sub-queries. Some are broader than the original, some narrower. It adds synonyms, related terms, and angle variations to maximize coverage of possible user intent. |
| Stage 4: Retrieval & synthesis | Each sub-query runs as a separate web search, and the results feed a Retrieval-Augmented Generation (RAG) step. Retrieved pages are chunked, scored for relevance, and synthesized. Only the most relevant passages are cited in the final answer. |

This entire process runs behind the scenes before the user sees any response. The sub-queries are invisible to the user, but they are the real determinants of which content gets cited. Your page may rank #1 for the original keyword and still not appear in the AI answer if it does not surface for any of the sub-queries the LLM internally generated.
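
The four stages above can be sketched as a small pipeline. This is a toy illustration only, not how Google AI Mode, ChatGPT Search, or Perplexity implement fan-out: the rule-based `classify_intent`, the fixed slot list, the templated `expand_query`, and the in-memory `CORPUS` are all hypothetical stand-ins for work a production system would do with an LLM and a live web index.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical in-memory "index": URL -> page text. A real system queries a web index.
CORPUS = {
    "example.com/ev-safety": "electric suv safety crash test ratings",
    "example.com/ev-range": "electric car range comparison miles",
    "example.com/ev-costs": "electric vehicle cost of ownership tax credits",
    "example.com/ev-cargo": "electric car cargo space trunk volume",
}


def classify_intent(query: str) -> str:
    """Stage 1 (toy rule): label the query type from surface cues."""
    q = query.lower()
    if "best" in q or " vs " in q:
        return "comparison"
    if "buy" in q or "price" in q:
        return "transactional"
    return "informational"


def identify_slots(query: str, intent: str) -> list[str]:
    """Stage 2 (toy rule): list the facets an answer would need to cover."""
    slots = ["safety", "range", "cost of ownership"]
    if intent == "comparison":
        slots.append("cargo space")
    return slots


def expand_query(query: str, slots: list[str]) -> list[str]:
    """Stage 3: turn each slot into a sub-query (an LLM would phrase these)."""
    return [f"{query} {slot}" for slot in slots]


def retrieve(sub_query: str, top_k: int = 1) -> list[tuple[str, int]]:
    """Stage 4a: score pages by word overlap with the sub-query."""
    terms = set(sub_query.lower().split())
    scored = [(url, len(terms & set(text.split()))) for url, text in CORPUS.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [pair for pair in scored[:top_k] if pair[1] > 0]


def fan_out(query: str) -> dict[str, list[str]]:
    """Stage 4b: run all stages and collect the cited URLs per sub-query."""
    intent = classify_intent(query)
    sub_queries = expand_query(query, identify_slots(query, intent))
    # Sub-queries are independent of each other, so they can be retrieved in parallel.
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(retrieve, sub_queries))
    return {sq: [url for url, _ in hits] for sq, hits in zip(sub_queries, results)}


citations = fan_out("best electric cars for families")
for sub_query, urls in citations.items():
    print(sub_query, "->", urls)
```

In this sketch a page is cited only if it wins one of the sub-queries. No page in the toy corpus needs to match the original query as a whole, which is the point the paragraph above makes about ranking #1 and still being left out.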

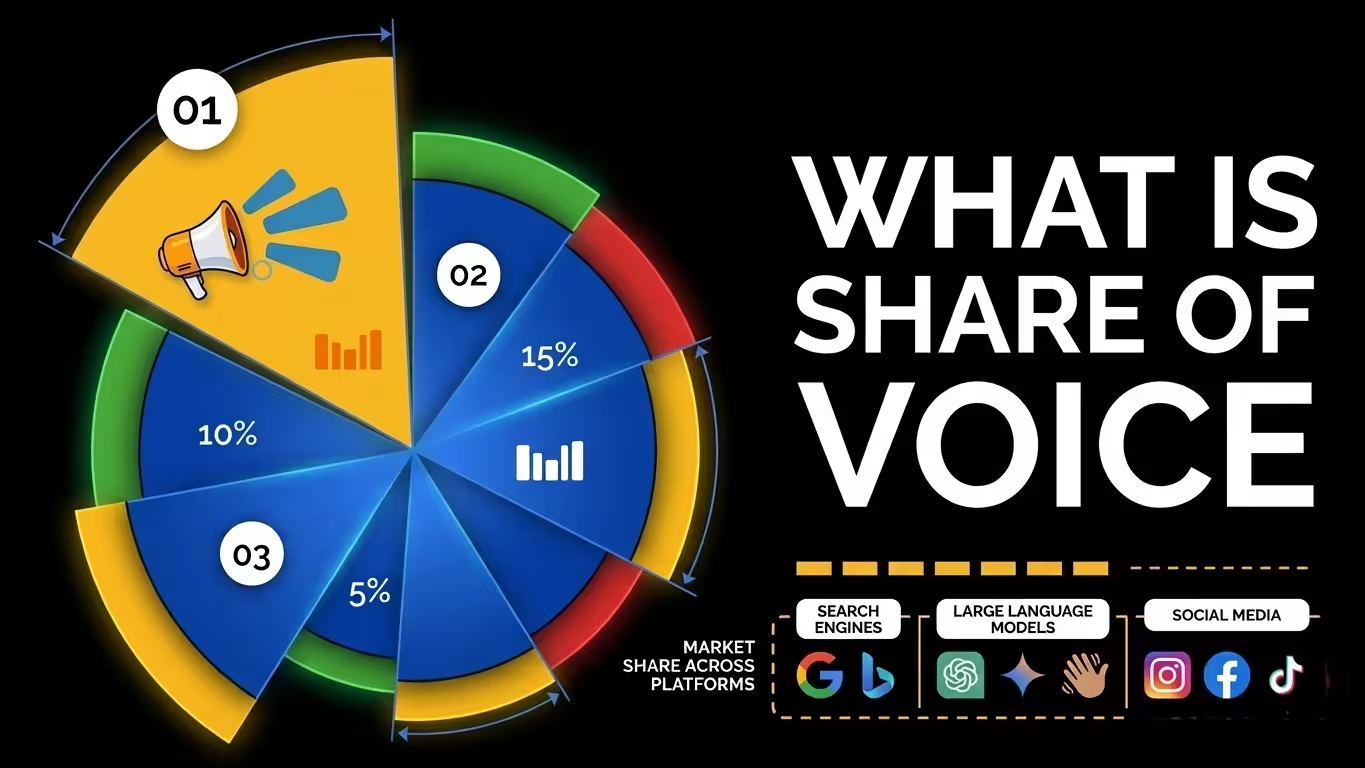

Why query fan-out matters: the data

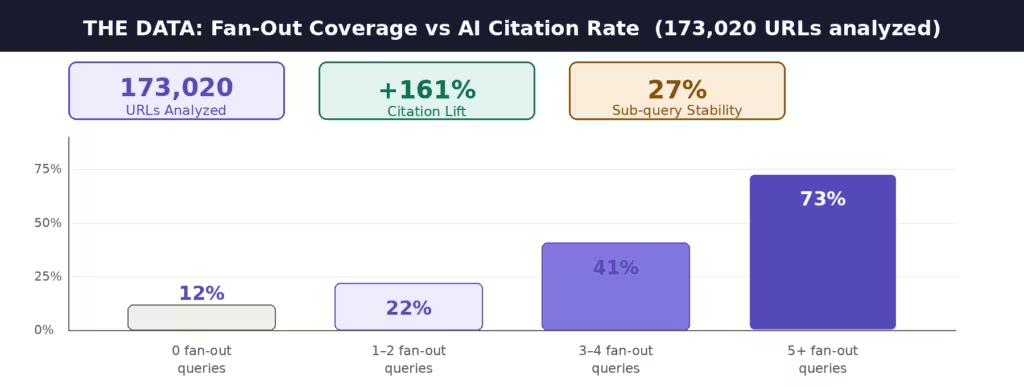

The strategic implications of query fan-out are not theoretical. Surfer SEO analyzed 173,020 URLs to quantify exactly how fan-out coverage affects AI citation rates. The results are decisive.

| Metric | Figure | What it means for you |
| --- | --- | --- |
| URLs analyzed | 173,020 | Largest study of query fan-out to date |
| Citation lift (fan-out coverage) | +161% | Content ranking for 5+ sub-queries |
| Fan-out stability across repeated searches | 27% | Only about 1 in 4 sub-queries repeat consistently |
| Sub-queries per prompt (typical) | 6 to 8 | Google confirmed the fan-out technique at Google I/O 2025 |
| Platforms using fan-out | 3+ | Google AI Mode, ChatGPT Search, Perplexity |

The 161% citation lift for content ranking across multiple fan-out queries is the most important figure in AI SEO right now. It tells you that the path to Google AI Overview inclusion is not better schema markup or an llms.txt file. It is comprehensive topical coverage that naturally surfaces across the sub-queries LLMs generate.
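
To make the +161% figure concrete: a relative lift compares the citation rate of pages with fan-out coverage against a baseline rate. The sketch below shows only the arithmetic. The two rates are hypothetical numbers chosen to reproduce a 161% lift; they are not figures from the Surfer SEO study.

```python
def citation_lift(baseline_rate: float, covered_rate: float) -> float:
    """Relative lift of covered_rate over baseline_rate, as a percentage."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (covered_rate - baseline_rate) / baseline_rate * 100


# Hypothetical rates: 10% of baseline pages cited vs 26.1% of pages
# that rank for several sub-queries.
lift = citation_lift(baseline_rate=0.10, covered_rate=0.261)
print(f"Lift: {lift:.0f}%")
print(f"Multiplier: {1 + lift / 100:.2f}x")
```

A +161% lift therefore means covered pages are cited at about 2.61 times the baseline rate, not 1.61 times.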

The 27% stability rate is equally revealing. Only about one in four sub-queries repeat consistently across repeated searches for the same prompt. This means chasing specific fan-out queries manually is not a sustainable strategy; broad topical authority is the durable solution.
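
One way to see what a stability rate measures is to run the same prompt several times, record the sub-queries each time, and count how many appear in every run. The sketch below assumes you have already collected those sub-queries by hand or with a tracking tool. The sample runs are made up, and the metric is illustrative rather than the methodology behind the 27% figure.

```python
def stability_rate(runs: list[list[str]]) -> float:
    """Share of distinct sub-queries that appear in every run."""
    normalized = [{q.strip().lower() for q in run} for run in runs]
    if not normalized:
        return 0.0
    all_queries = set().union(*normalized)
    if not all_queries:
        return 0.0
    stable = set.intersection(*normalized)
    return len(stable) / len(all_queries)


# Made-up sub-queries from three runs of the same prompt.
runs = [
    ["ev range comparison", "ev tax credits 2025", "safest electric suvs"],
    ["ev range comparison", "ev charging at home", "electric suv cargo space"],
    ["EV range comparison", "ev reliability rankings", "safest electric suvs"],
]
print(f"{stability_rate(runs):.0%} of sub-queries appeared in every run")
```

Here only one of the six distinct sub-queries survives all three runs, which illustrates why targeting individual sub-queries is fragile.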

Traditional search vs AI search: how they differ

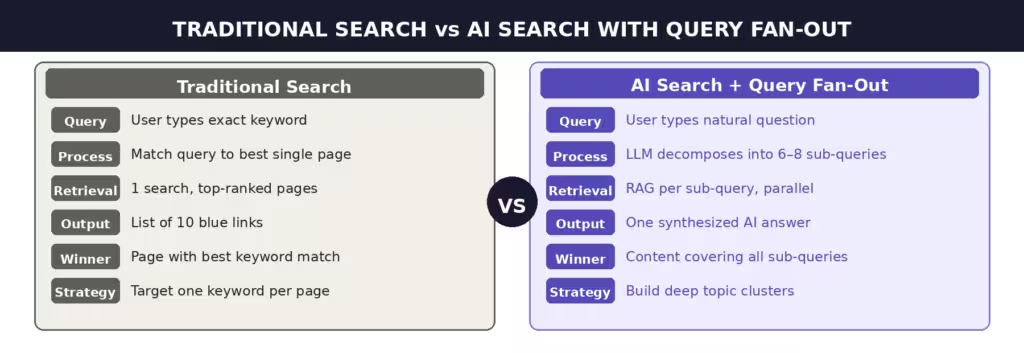

Understanding query fan-out requires understanding the fundamental architectural difference between how traditional search engines and AI search systems process queries.

Traditional search: Google matches your query against the pages in its index and ranks them. Relevance is determined by signals such as keyword match, backlinks, and on-page factors. The goal is to return the best page.

AI search with query fan-out: The LLM rewrites your query behind the scenes, expanding it into 6 to 8 related searches. It retrieves content for each search via Retrieval-Augmented Generation (RAG), processes the returned chunks, and generates a synthesized response. The goal is to compose a complete answer, not to send you to a single page.

For SEO, this shift is profound. Visibility in AI search is earned by answering the sub-questions LLMs rely on, not just the headline keyword.

How to optimize for query fan-out

The strategies that win in a query fan-out world are the ones that build genuine topical depth. Here is a prioritized action plan.

| Tactic | Why it works | Impact | Frequency |
| --- | --- | --- | --- |
| Build topic clusters | Natural coverage of sub-queries without guessing | High | Ongoing |
| Comprehensive, fact-dense content | Broad ranking across multiple angles | High | Per article |
| FAQ sections (H3 + direct answer) | Captures ‘People Also Ask’ & LLM Q&A pairs | High | Per article |
| Structured data (tables, lists) | Easy for LLMs to extract and cite | Medium | Per article |
| Track fan-out queries for priority KWs | Identify which sub-queries you’re missing | Medium | Monthly |
| Internal linking between cluster pages | LLM bots see topical authority signals | Medium | Ongoing |

The highest-leverage move is building content clusters. When your site covers a topic deeply and interconnects those pages through internal links, LLMs naturally encounter your content across many of the sub-queries they generate, without you ever having to guess which sub-queries they will run.
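
A quick way to audit whether a cluster is actually interconnected is to treat internal links as a graph and look for cluster pages that do not link to the pillar page or do not receive a link from it. The sketch below assumes you have exported your internal links as a mapping from each URL to the URLs it links to. The URLs, the `audit_cluster_links` helper, and the pillar-page convention are hypothetical.

```python
def audit_cluster_links(
    links: dict[str, set[str]], pillar: str, cluster: set[str]
) -> dict[str, list[str]]:
    """Report cluster pages missing a link to or from the pillar page."""
    pillar_links = links.get(pillar, set())
    report: dict[str, list[str]] = {"no_link_to_pillar": [], "no_link_from_pillar": []}
    for page in sorted(cluster - {pillar}):
        if pillar not in links.get(page, set()):
            report["no_link_to_pillar"].append(page)
        if page not in pillar_links:
            report["no_link_from_pillar"].append(page)
    return report


# Hypothetical export: page -> set of internal pages it links to.
links = {
    "/ev-guide": {"/ev-safety", "/ev-range"},
    "/ev-safety": {"/ev-guide"},
    "/ev-range": set(),
    "/ev-costs": {"/ev-guide"},
}
cluster = {"/ev-guide", "/ev-safety", "/ev-range", "/ev-costs"}
report = audit_cluster_links(links, pillar="/ev-guide", cluster=cluster)
print(report)
```

With this sample data, `/ev-range` never links back to the pillar and `/ev-costs` is not linked from it, so both would be flagged for a fix.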

For critical keywords, you can reverse-engineer the fan-out directly: open ChatGPT, Perplexity, or Google AI Mode, enter your target keyword, and observe how the response is structured. The sub-topics the AI surfaces are a reliable signal of the sub-queries it generated. If your content does not cover those sub-topics, you have a fan-out gap.
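
The gap check described above amounts to a set difference between the sub-topics an AI answer covers and the sub-topics your site covers. A minimal sketch, assuming you have noted both lists by hand: the topic labels are hypothetical, and matching is by exact normalized label, so near-synonyms would still need manual mapping.

```python
def normalize(topic: str) -> str:
    """Lowercase and collapse whitespace so labels compare reliably."""
    return " ".join(topic.lower().split())


def fan_out_gaps(observed_topics: list[str], covered_topics: list[str]) -> list[str]:
    """Return observed sub-topics with no matching page, in observed order."""
    covered = {normalize(t) for t in covered_topics}
    gaps: list[str] = []
    for topic in observed_topics:
        key = normalize(topic)
        if key not in covered and key not in gaps:
            gaps.append(key)
    return gaps


# Sub-topics seen in the AI answer vs. sub-topics your site already covers.
observed = ["Safety ratings", "Range comparison", "Tax credits", "Home charging"]
covered = ["range comparison", "safety  ratings"]
print(fan_out_gaps(observed, covered))
```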

Frequently asked questions

What is query fan-out in simple terms?

Query fan-out is the process where AI search systems split a single user question into multiple smaller searches, run each search separately, and combine the results into one answer. Instead of finding the best single page, AI search builds an answer from many sources, retrieved separately for each sub-question.

Which platforms use query fan-out?

Google AI Mode, ChatGPT Search, and Perplexity all use forms of query fan-out. Google confirmed the technique publicly at Google I/O 2025. ChatGPT and Perplexity exhibit the same behavior when web search is enabled, though the exact sub-queries generated differ across platforms and even across repeated searches on the same platform.

How many sub-queries does an LLM generate per prompt?

Research and documented examples show that AI search systems typically generate between 6 and 12 sub-queries per complex prompt, with 6 to 8 being the most common range. Google’s confirmation at Google I/O 2025 described issuing a multitude of queries simultaneously, without giving a specific count. The exact number depends on query complexity: a simple factual question may generate fewer sub-queries than a multi-dimensional research prompt.

Does query fan-out affect Google traditional search rankings?

Query fan-out specifically affects AI-generated responses: AI Overviews and AI Mode in Google, plus AI-native search tools like Perplexity and ChatGPT Search. However, the content strategy required to win in fan-out (comprehensive topic clusters, fact-dense writing, strong internal linking) also improves traditional rankings. The two goals are complementary, not in conflict.

What is a ‘fan-out gap’ and how do I find mine?

A fan-out gap is a sub-topic your content does not cover that AI systems commonly retrieve when processing queries in your space. To find yours: enter your target keyword into ChatGPT or Perplexity and observe the sub-topics covered in the response. Check whether your site has dedicated content for each sub-topic. Any gap is a fan-out gap. Prioritize the highest-traffic sub-topics first.
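
Prioritizing gaps by traffic is a simple sort once each gap has a volume estimate. In this sketch the monthly search volumes are placeholder numbers. In practice they would come from whichever keyword tool you already use.

```python
def prioritize_gaps(gaps: list[str], monthly_volume: dict[str, int]) -> list[tuple[str, int]]:
    """Order gaps by estimated volume, highest first; unknown topics count as 0."""
    ranked = [(gap, monthly_volume.get(gap, 0)) for gap in gaps]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


gaps = ["home charging", "tax credits", "battery warranty"]
volume = {"tax credits": 5400, "home charging": 2900}  # placeholder estimates
for topic, searches in prioritize_gaps(gaps, volume):
    print(f"{topic}: {searches}")
```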

Is query fan-out the same as topic clustering?

They are related but not the same thing. Query fan-out is a technical process inside AI search systems. Topic clustering is a content strategy. The reason topic clustering is the leading strategy for query fan-out optimization is that comprehensive topical coverage naturally surfaces your content across the many sub-queries an LLM generates, without requiring you to predict each one.

Conclusion

Query fan-out represents the most significant structural change to search relevance in a decade. When an AI search engine receives your users’ questions, it is not looking for your page. It is looking for answers to 6 to 8 related questions, and then deciding which sources to cite in its synthesized response.

The brands that will dominate AI search are not the ones with the best single keyword ranking. They are the ones with the deepest topical coverage: the sites that can answer whatever sub-query the LLM generates, not just the headline one.

Start by identifying your fan-out gaps on your most valuable keywords. Build or expand your topic clusters to close those gaps. Write comprehensive, fact-dense, FAQ-rich content that is structured for LLM extraction. And measure your citation rates across AI platforms, not just your position in the blue links.

The race for AI search visibility has already started. The brands optimizing for query fan-out today will be the ones cited tomorrow.