This comprehensive guide covers everything performance marketers, developers, and business professionals need to understand about context windows: what they are, why they matter, how they work across leading AI models, and the strategic implications for choosing the right AI platform for your specific use case.

What is a Context Window in AI?

A context window is the maximum amount of text an AI language model can process and remember at one time, measured in tokens. Think of it as the AI’s working memory or short-term memory capacity. Just as humans can only hold about seven items in short-term memory before forgetting earlier information, large language models have a finite limit on how much text they can actively consider when generating responses.

The context window encompasses everything the model needs to track during an interaction: your current prompt, all previous messages in the conversation, system instructions from the AI company, any documents you’ve uploaded, and the response being generated. When this combined information exceeds the context window size, the model must either truncate earlier content or summarize it, potentially losing important details from the beginning of your conversation.

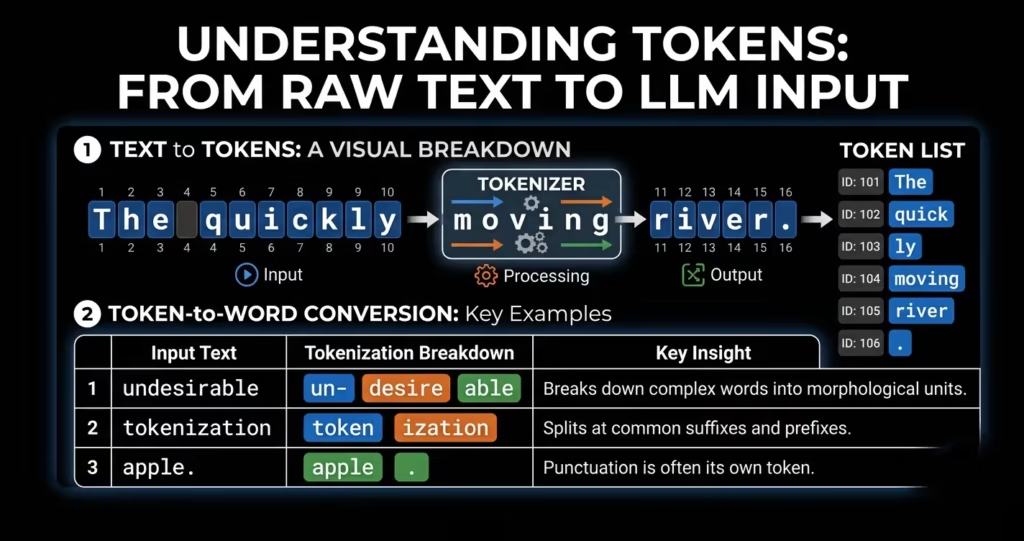

Understanding Tokens

Context windows are measured in tokens rather than words. A token represents a chunk of text that can be a single character, part of a word, a complete word, or even a short phrase, depending on the model’s tokenization method. As a general rule, one token equals approximately 0.75 words in English, meaning 1,000 tokens converts to roughly 750 words. However, this ratio varies significantly across languages, with languages like Chinese or Japanese using different tokenization patterns than English.
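The rule of thumb above can be turned into a quick estimator. This is a minimal sketch that assumes the 0.75 words-per-token ratio holds for your English text; the function names are illustrative, and a real tokenizer will give different counts for code, numbers, or other languages.

```python
# Rule-of-thumb ratio quoted in the text: 1 token is about 0.75 English words.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Roughly estimate the token count of English text from its word count."""
    word_count = len(text.split())
    return round(word_count / WORDS_PER_TOKEN)


def estimate_words(token_count: int) -> int:
    """Roughly estimate how many English words fit in a token budget."""
    return round(token_count * WORDS_PER_TOKEN)


print(estimate_words(1_000))            # 750
print(estimate_tokens("word " * 750))   # 1000
```

Treat the result as a planning figure only; for billing or hard limits, count with the tokenizer of the model you actually use.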

Why Context Windows Matter for AI Performance

Context windows represent a critical performance factor that directly impacts AI accuracy, coherence, and practical usefulness across real-world applications. The size and quality of a model’s context window determine whether it can handle complex enterprise workflows or falls short when processing substantial information.

Impact on Accuracy and Hallucinations

Larger context windows enable AI models to maintain higher factual accuracy by keeping more relevant information in active memory. When models can reference complete documents rather than truncated summaries, they produce fewer hallucinations and incorrect statements. Research published in 2024 demonstrated that extending context windows from 4,000 to 100,000 tokens reduced hallucination rates by over 40 percent for document analysis tasks.

Conversation Continuity

Context windows determine how long a conversation can continue before the AI forgets earlier discussion points. A model with an 8,000-token window might lose track of your project requirements mentioned at the start of a lengthy conversation, while a 200,000-token window maintains coherence across hours of interaction. This continuity proves essential for complex projects requiring iterative refinement and detailed back-and-forth exchanges.

Document Processing Capabilities

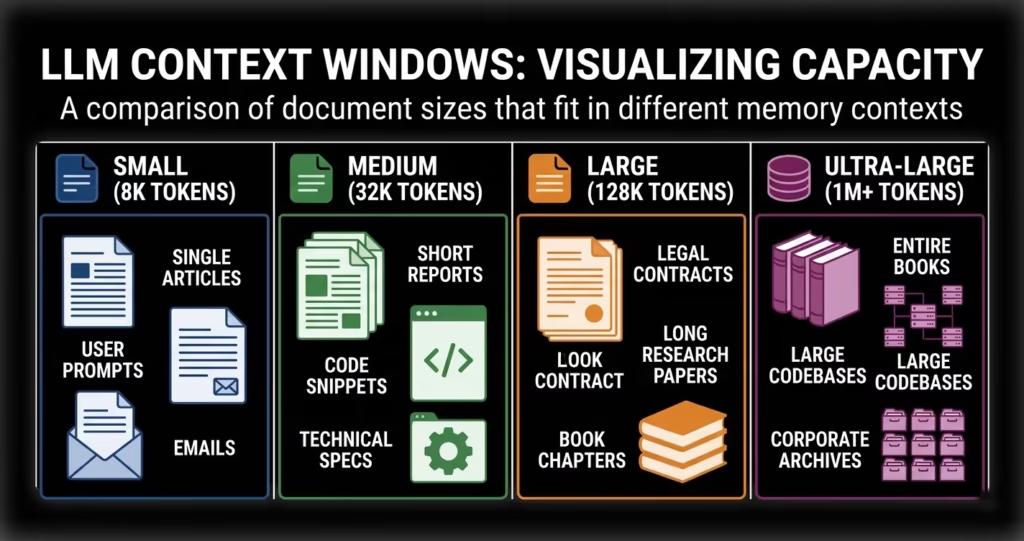

The maximum document size an AI can analyze in a single operation depends entirely on its context window. With a 4,000-token limit, you’re restricted to analyzing about 3,000 words at once. A 1,000,000-token window enables processing entire books, comprehensive legal contracts, complete software codebases, or years of email correspondence without splitting the content across multiple sessions.

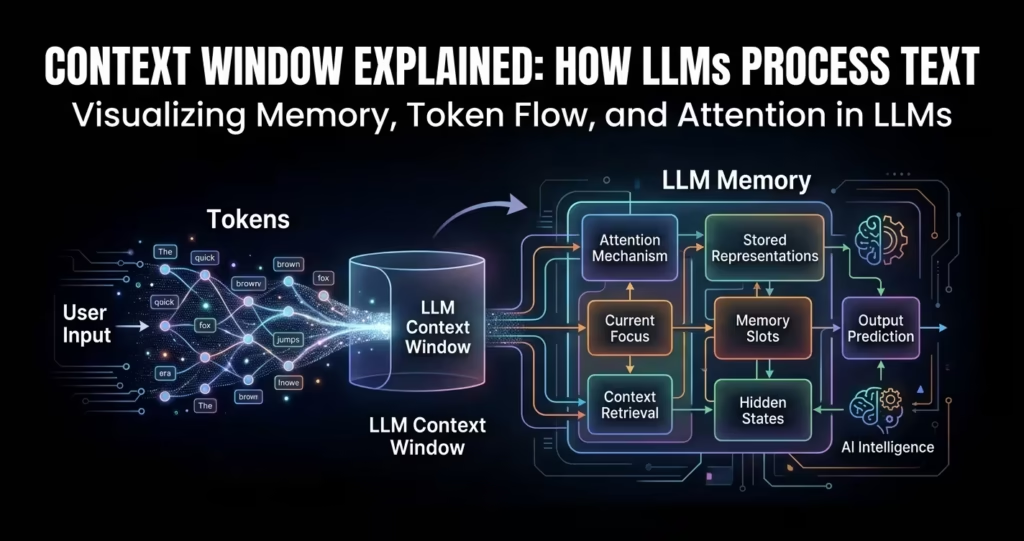

How Context Windows Actually Work: The Technical Foundation

Understanding how context windows function requires examining the transformer architecture that powers modern large language models. The key mechanism behind context windows is the attention mechanism, which allows models to weigh the relevance of different tokens when generating each new token in a response.

The Attention Mechanism

Transformer models use self-attention to process sequences of tokens. When generating a response, the model computes relationships between every token and every other token in the context window. This attention mechanism examines which previous tokens are most relevant for predicting the next token. The computational requirements scale quadratically with sequence length: doubling the context window requires roughly four times the attention computation, which explains why longer context windows demand more resources and time.
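To make the quadratic scaling concrete, here is a deliberately simplified single-head self-attention in plain Python. It is a sketch under stated assumptions: queries, keys, and values are the input vectors themselves (production models apply learned projections and use many heads), and the counter only tallies pairwise score computations.

```python
import math


def self_attention(vectors):
    """Single-head scaled dot-product self-attention over a list of vectors.

    Simplification: queries, keys, and values are the inputs themselves.
    Returns (outputs, number_of_pairwise_scores_computed).
    """
    dim = len(vectors[0])
    outputs, pair_scores = [], 0
    for query in vectors:
        # Score this token against every token in the context.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        pair_scores += len(scores)
        # Softmax turns the scores into attention weights that sum to 1.
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # The output is the attention-weighted average of the value vectors.
        outputs.append(
            [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        )
    return outputs, pair_scores


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
_, cost_n = self_attention(tokens)
_, cost_2n = self_attention(tokens * 2)
print(cost_n, cost_2n)  # 16 64
```

Four tokens need 16 pairwise scores and eight tokens need 64, which is the four-fold jump described above.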

Positional Encoding

Since transformers process all tokens simultaneously rather than sequentially, they rely on positional encoding to understand word order and sequence structure. This encoding system assigns each token a position marker within the context window, allowing the model to distinguish between “The dog chased the cat” and “The cat chased the dog” even though both contain identical tokens in different orders.
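One classic scheme is the sinusoidal encoding from the original Transformer paper, sketched below. It is shown only as an illustration; many current models use other schemes (such as learned or rotary position embeddings), and the helper `tag_positions` is a hypothetical name for this example.

```python
import math


def positional_encoding(position, dim):
    """Sinusoidal position vector: sine on even indices, cosine on odd ones."""
    encoding = []
    for i in range(dim):
        # Each pair of dimensions (2k, 2k+1) shares one wavelength.
        angle = position / (10000 ** ((i - i % 2) / dim))
        encoding.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return encoding


def tag_positions(words, dim=4):
    """Pair each word with the encoding of its position in the sequence."""
    return [(word, positional_encoding(pos, dim)) for pos, word in enumerate(words)]


sentence_a = ["the", "dog", "chased", "the", "cat"]
sentence_b = ["the", "cat", "chased", "the", "dog"]

# Same words in both sentences, but "dog" sits at position 1 in one
# and position 4 in the other, so it carries a different position vector.
dog_in_a = tag_positions(sentence_a)[1]
dog_in_b = tag_positions(sentence_b)[4]
print(dog_in_a[0], dog_in_b[0])      # dog dog
print(dog_in_a[1] != dog_in_b[1])    # True
```

In a real model the position vector is combined with the token embedding, so identical words at different positions produce different inputs to attention.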

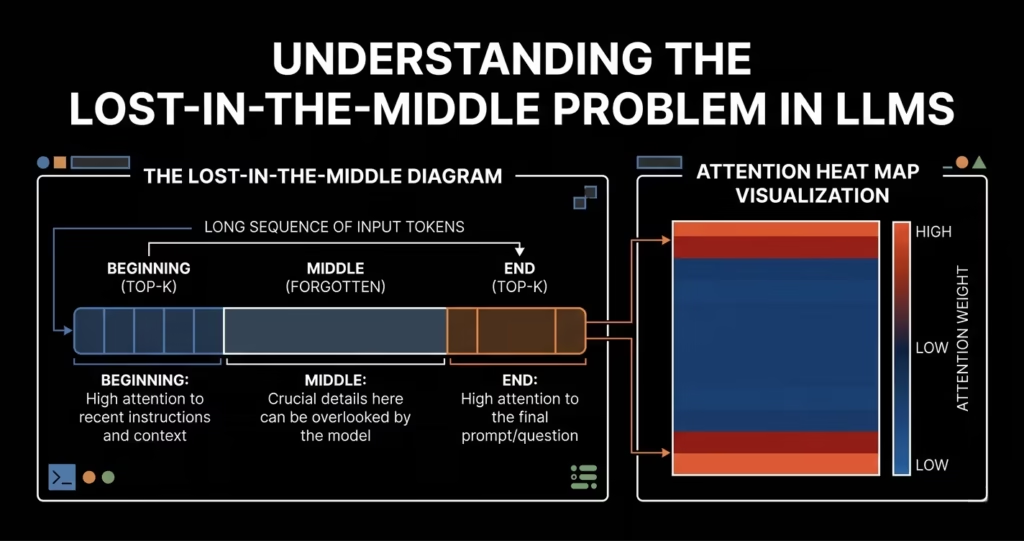

The Lost-in-the-Middle Problem

Research by Stanford University in 2023 revealed a significant challenge with long context windows: models reliably recall information from the beginning and end of their context but struggle with details buried in the middle. This “lost-in-the-middle” phenomenon means that simply having a large context window doesn’t guarantee the model will effectively use all available information. Models perform best when critical information appears near the start or conclusion of the input context.

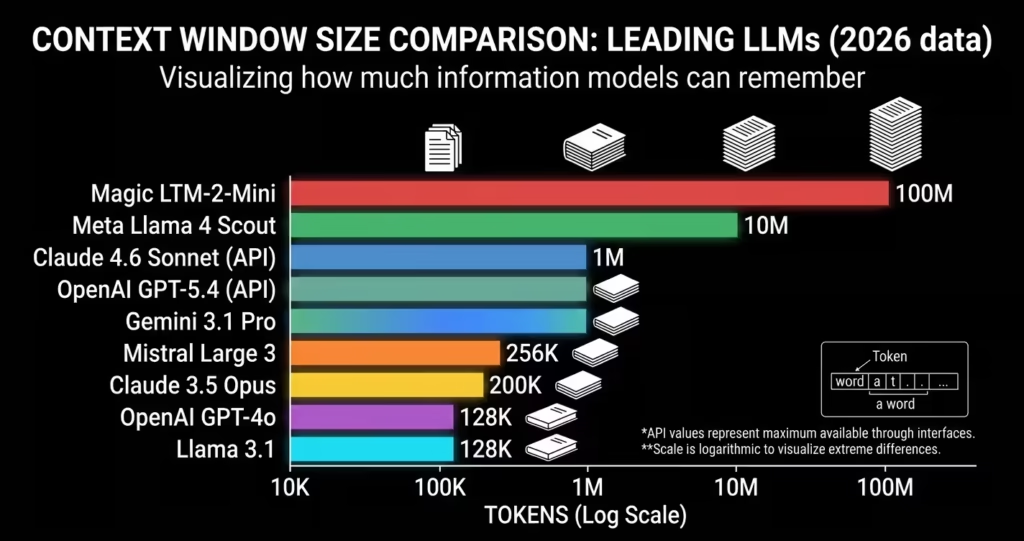

Context Window Sizes Across Leading AI Models (April 2026)

The AI industry has witnessed an explosive expansion in context window capabilities over the past three years. Understanding the current landscape requires examining both the advertised specifications and real-world performance characteristics of leading models.

| AI Model | Context Window | Approximate Pages | Developer |
| --- | --- | --- | --- |
| Gemini 3 Pro | 10,000,000 tokens | 13,330 pages | Google |
| Llama 4 Scout | 10,000,000 tokens | 13,330 pages | Meta |
| Gemini 2.5 Pro | 1,000,000 tokens | 1,333 pages | Google |
| GPT-5.2 | 400,000 tokens | 533 pages | OpenAI |
| Claude Opus 4.6 | 200,000 tokens | 265 pages | Anthropic |
| GPT-4o | 128,000 tokens | 170 pages | OpenAI |
| GPT-3.5 (original) | 4,096 tokens | 5 pages | OpenAI |

Table 1: Current context window sizes across major AI models as of April 2026
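The page figures in Table 1 follow from a simple conversion. The sketch below assumes about 750 tokens per page, a value inferred from the table itself (1,000,000 tokens listed as 1,333 pages) rather than stated by the source; the table rounds some entries slightly differently.

```python
# Assumption inferred from Table 1: 1,000,000 tokens is listed as about 1,333 pages.
TOKENS_PER_PAGE = 750


def approximate_pages(tokens: int) -> int:
    """Convert a token budget into whole pages, rounding down."""
    return tokens // TOKENS_PER_PAGE


for name, window in [
    ("Gemini 2.5 Pro", 1_000_000),
    ("GPT-5.2", 400_000),
    ("GPT-4o", 128_000),
    ("GPT-3.5 (original)", 4_096),
]:
    print(f"{name}: {approximate_pages(window):,} pages")
```

Actual page counts depend on layout and language, so these numbers are best read as orders of magnitude.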

Key Observations from the Current Landscape

The competition between AI providers has driven context windows from 4,000 tokens in November 2022 to 10 million tokens by early 2026, representing a 2,500x increase in just over three years. Google’s Gemini currently leads with its 10 million token capacity in the Gemini 3 Pro model, enabling analysis of entire corporate knowledge bases or years of documentation in a single session. Meta’s open-source Llama 4 Scout matches this capacity while offering deployment flexibility for organizations requiring data sovereignty.

Anthropic’s Claude maintains a more conservative 200,000-token window but emphasizes consistent performance across its full range with less than 5 percent accuracy degradation throughout the context. OpenAI’s strategy targets the middle ground with 128,000 tokens in standard models and 400,000 tokens in the premium GPT-5 family, focusing on reliability and broad ecosystem integration.

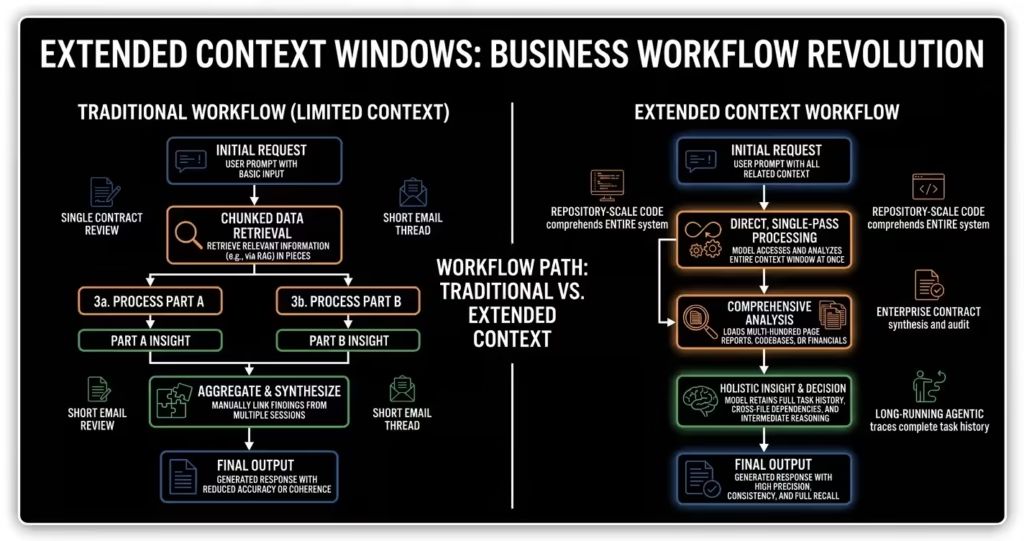

Real-World Applications Enabled by Extended Context Windows

Extended context windows unlock practical capabilities that transform how professionals work with AI across diverse industries and use cases. Understanding these applications helps organizations identify strategic opportunities for AI integration.

Software Development and Code Analysis

Developers working with large codebases can now load entire projects into context, enabling AI assistants to understand architectural patterns, identify cross-file dependencies, and suggest optimizations that span multiple modules. An enterprise application with 500,000 lines of code can run to several million tokens, so it fits only within the largest 10-million-token windows, while smaller projects fit within the 200,000- to 1,000,000-token tier; in both cases, comprehensive analysis becomes possible without manual file selection. This capability proves particularly valuable for security audits, refactoring initiatives, and onboarding new team members to complex systems.

Legal Document Review and Contract Analysis

Legal professionals leverage extended context windows to analyze multi-party agreements, compare contract versions across years, and identify inconsistencies in complex legal frameworks. A 200,000-token window accommodates most standard contracts plus relevant case law and regulatory guidance, enabling AI to provide contextually informed analysis rather than isolated clause review. Law firms report 60 percent time savings on initial contract review when using long-context AI tools.

Academic Research and Literature Review

Researchers use million-token context windows to synthesize findings across dozens of academic papers simultaneously, identifying contradictions, gaps in existing research, and emerging trends that might escape notice during manual review. Graduate students conducting literature reviews can load 50 to 100 papers into context and ask comparative questions about methodologies, findings, and theoretical frameworks, accelerating the research process from weeks to hours.

Customer Service and Support Documentation

Enterprise support teams deploy AI chatbots powered by long-context models to provide accurate assistance by loading complete product documentation, troubleshooting guides, and historical support tickets into context. When paired with search that selects only the passages relevant to each question, this approach is known as retrieval-augmented generation (RAG); it dramatically reduces hallucinations and ensures customers receive verified information rather than AI speculation. Companies report first-contact resolution rates improving from 40 percent to 75 percent after implementing long-context AI support systems.

Challenges and Limitations of Large Context Windows

While extended context windows deliver significant capabilities, they introduce technical challenges and practical trade-offs that organizations must consider when designing AI systems and workflows.

Computational Costs and Processing Time

Processing longer contexts requires disproportionately more computational resources due to the quadratic scaling of attention mechanisms. Doubling context length roughly quadruples attention computation, translating to both longer response times and higher costs per query. Organizations deploying long-context AI must budget for 3x to 5x higher infrastructure costs compared to shorter-context implementations. Response latency rises as well, with 1-million-token queries taking several minutes versus seconds for shorter inputs.

Accuracy Degradation Across Long Contexts

Research demonstrates that effective context capacity typically runs at 60 to 70 percent of advertised limits before accuracy meaningfully degrades. A model advertised with a 200,000-token window might maintain peak performance only up to roughly 120,000 to 140,000 tokens, with precision declining thereafter. This phenomenon, sometimes called context rot, means organizations should establish conservative limits well below technical maximums for production deployments requiring high reliability.

Cost Per Query Increases

Most AI providers charge per token processed, making long-context queries substantially more expensive than shorter interactions. Analyzing a 500-page document in a single query consumes roughly 500 times the input tokens of a single page, and under per-token pricing the bill grows almost in step. Organizations must carefully evaluate whether comprehensive context justifies the cost premium or whether intelligent chunking and retrieval strategies deliver comparable results at lower expense.
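A back-of-the-envelope calculator makes the per-token pricing effect easier to reason about. The prices below are hypothetical placeholders, not any provider’s actual rates, and the sketch assumes separate flat prices per million input and output tokens with no tiering or caching discounts.

```python
def query_cost(input_tokens, output_tokens, input_price_per_million, output_price_per_million):
    """Cost of one query under flat per-million-token pricing."""
    return (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000


# Hypothetical prices in dollars per million tokens (assumptions for illustration).
INPUT_PRICE = 2.00
OUTPUT_PRICE = 8.00

short_query = query_cost(5_000, 500, INPUT_PRICE, OUTPUT_PRICE)
long_query = query_cost(500_000, 500, INPUT_PRICE, OUTPUT_PRICE)

print(f"short: ${short_query:.3f}, long: ${long_query:.3f}")
# The ratio lands below 100 even though the input is 100x larger,
# because the cost of the 500-token reply is the same in both cases.
print(f"ratio: {long_query / short_query:.1f}x")
```

Substituting your provider’s published rates and your typical reply length gives a quick estimate before committing to a long-context design.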

Security and Privacy Considerations

Loading sensitive documents into commercial AI services raises data governance concerns, particularly for regulated industries like healthcare, finance, and government. While major providers offer enterprise tiers with enhanced security guarantees, organizations must verify that long-context processing doesn’t compromise compliance requirements. Self-hosted open-source models offer one solution but introduce infrastructure complexity and maintenance overhead.

Context Window Optimization Strategies and Best Practices

Organizations implementing AI systems must develop intelligent approaches to context management that balance capability against cost and performance constraints. These strategies maximize value while mitigating the limitations of extended context processing.

Retrieval-Augmented Generation (RAG)

RAG systems intelligently search through large document repositories and load only the most relevant segments into the context window rather than brute-force loading everything. This approach creates effectively infinite context by retrieving information on demand while keeping actual context window usage lean. Modern RAG implementations combine vector search, semantic chunking, and dynamic context assembly to maintain accuracy while controlling costs.
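The retrieve-then-assemble flow can be sketched in a few lines. This toy version substitutes word-count vectors and cosine similarity for a real embedding model and vector database, so every function here (`embed`, `retrieve`, `build_prompt`) is an illustrative assumption rather than any particular library’s API.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': lowercase word counts. Real systems use learned vectors."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, chunks, top_k=2):
    """Return the top_k chunks most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vector, embed(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(query, chunks, top_k=2):
    """Assemble a prompt that contains only the retrieved chunks."""
    context = "\n".join(f"- {chunk}" for chunk in retrieve(query, chunks, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"


chunks = [
    "Refunds are processed within 14 days of the return request.",
    "The device supports Bluetooth 5.0 and USB-C charging.",
    "To reset the password, open Settings and choose Reset Password.",
]
print(build_prompt("How do I reset my password?", chunks, top_k=1))
```

Only the password chunk reaches the prompt, so context usage stays small no matter how large the repository grows.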

Progressive Summarization

For long conversations or multi-step workflows, progressive summarization condenses older context into concise summaries while preserving recent details in full. This technique maintains task continuity without exceeding context limits. An AI agent working on a 50-step task might keep the last 5 steps (46 through 50) in complete detail, summarize steps 1 through 45 into key facts, and preserve the original user intent throughout, effectively managing a context budget that would otherwise overflow.
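A minimal sketch of this pattern keeps the most recent steps verbatim and condenses everything before them. The `summarize` function is a placeholder that keeps only the first sentence of each step; a real agent would most likely call a model to produce the summary.

```python
def summarize(steps):
    """Placeholder summarizer: keep only the first sentence of each step."""
    return "; ".join(step.split(".")[0] for step in steps)


def build_context(user_intent, steps, keep_recent=5):
    """Return context parts: the goal, a summary of older steps, recent steps in full."""
    split = max(len(steps) - keep_recent, 0)
    older, recent = steps[:split], steps[split:]
    parts = [f"Goal: {user_intent}"]
    if older:
        parts.append("Summary of earlier steps: " + summarize(older))
    parts.extend(recent)
    return parts


steps = [f"Step {n}: result {n}. Long details for step {n} go here." for n in range(1, 51)]
context = build_context("Prepare the quarterly report", steps)

print(len(context))      # 7: goal, one summary, five recent steps
print(context[-1][:8])   # Step 50:
```

The original user intent always occupies the first slot, so it survives no matter how many steps get condensed.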

Context Engineering

Context engineering has emerged as a specialized discipline focused on intentionally constructing the AI’s information environment. This practice involves careful selection of what enters the context window, strategic positioning of critical information at the beginning or end to avoid the lost-in-the-middle problem, and continuous monitoring of context utilization patterns. Organizations building production AI systems now hire engineers specifically skilled in context optimization, system architecture, and information retrieval.
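One way to act on the positioning advice is to reorder retrieved chunks so the strongest material sits at the edges of the prompt. The sketch below assumes each chunk already has a relevance score from some upstream ranker; the scores and the function name are hypothetical.

```python
def order_for_edges(chunks_with_scores):
    """Place the most relevant chunks at the start and end, the least relevant in the middle."""
    ranked = sorted(chunks_with_scores, key=lambda pair: pair[1], reverse=True)
    front, back = [], []
    for index, (chunk, _score) in enumerate(ranked):
        # Alternate: best chunk to the front, second best to the back, and so on.
        (front if index % 2 == 0 else back).append(chunk)
    return front + back[::-1]


scored = [("A", 0.9), ("B", 0.8), ("C", 0.5), ("D", 0.2), ("E", 0.1)]
print(order_for_edges(scored))  # ['A', 'C', 'E', 'D', 'B']
```

The best chunk opens the context, the second best closes it, and the weakest lands in the middle where recall tends to be poorest.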

Observability and Monitoring

Production AI deployments require observability tools that trace exactly what information occupies the context window at each interaction step, monitor utilization against limits, and detect degradation patterns before they impact user experience. Platforms like LangSmith, Langfuse, and Arize provide detailed visibility into context consumption, enabling teams to identify optimization opportunities and catch issues early in development cycles.
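The kind of record such tools keep can be approximated with a small in-house tracker. This is a generic sketch, not the API of LangSmith, Langfuse, or Arize; the class name, fields, and the 60 percent warning threshold (the lower end of the 60 to 70 percent guidance mentioned earlier) are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ContextTrace:
    """Records what occupies the context window at each interaction step."""

    limit: int
    warn_ratio: float = 0.6  # assumed threshold: lower end of the 60-70% guidance
    steps: list = field(default_factory=list)

    def record(self, step_name, **component_tokens):
        """Store a per-component token breakdown and flag high utilization."""
        used = sum(component_tokens.values())
        entry = {
            "step": step_name,
            "components": component_tokens,
            "used": used,
            "utilization": used / self.limit,
            "warning": used > self.limit * self.warn_ratio,
        }
        self.steps.append(entry)
        return entry


trace = ContextTrace(limit=200_000)
first = trace.record("turn-1", system=1_500, history=20_000, documents=90_000, reply=4_000)
second = trace.record("turn-2", system=1_500, history=30_000, documents=90_000, reply=4_000)

print(first["used"], first["warning"])    # 115500 False
print(second["used"], second["warning"])  # 125500 True
```

Logging the breakdown per component shows which part of the context (here, conversation history) is pushing utilization over the threshold.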

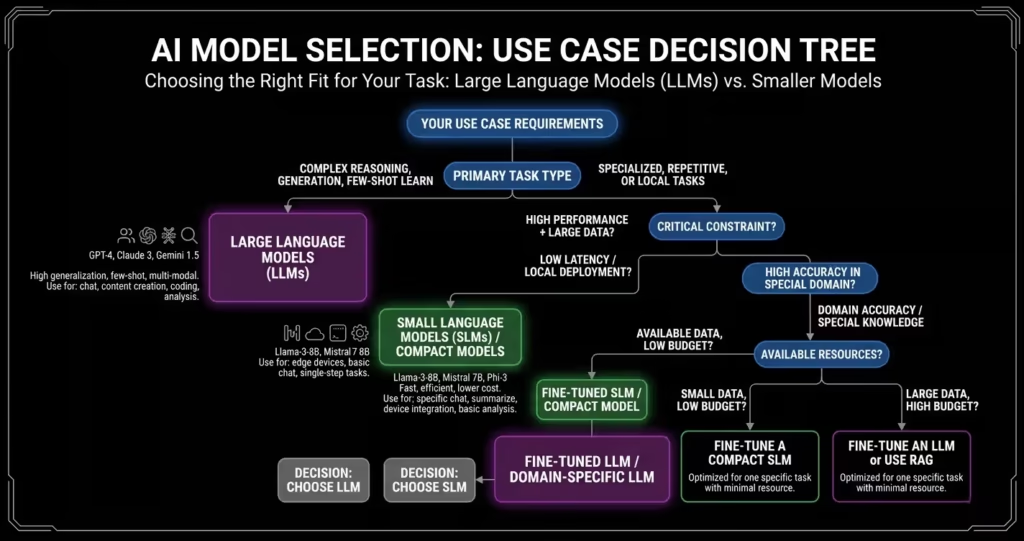

Choosing the Right AI Model Based on Context Window Requirements

Selecting an appropriate AI model requires matching context window capabilities against specific use case demands while considering cost, performance, and reliability factors beyond raw token capacity.

For Document Processing Under 50,000 Words

Standard models with 128,000-token windows prove sufficient for most business documents, technical reports, and typical enterprise content. GPT-4o and similar offerings deliver excellent performance at competitive pricing for this tier. Organizations processing contracts, proposals, and standard documentation rarely justify premium long-context models when 128K suffices.

For Software Development and Code Analysis

Development workflows benefit from 200,000 to 400,000-token windows that accommodate substantial codebases plus documentation. Claude Opus 4.6 with its 200,000-token capacity and strong code comprehension delivers consistent results for development teams. GPT-5 family models offering 400,000 tokens provide additional headroom for enterprise-scale applications with extensive dependency graphs.

For Comprehensive Research and Multi-Document Analysis

Academic research, competitive intelligence, and cross-document synthesis benefit from million-token windows that enable simultaneous analysis of dozens of sources. Gemini 2.5 Pro’s 1-million-token capacity handles comprehensive literature reviews, while the 10-million-token Gemini 3 Pro supports analysis spanning hundreds of research papers or years of corporate documentation.

For Production Applications with Reliability Requirements

Business-critical applications prioritizing consistency over maximum capacity should consider models optimized for reliable performance across their advertised range. Claude’s emphasis on maintaining accuracy throughout its context window makes it valuable for regulated industries and applications where errors carry significant consequences. Establish conservative limits at 60 to 70 percent of maximum capacity to maintain quality guarantees.
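The selection logic in this section can be sketched as a lookup over the window sizes from Table 1, applying the conservative limit described above. It considers context capacity only; cost, output quality, and data-governance constraints are deliberately left out, and the 0.6 safety ratio is an assumption taken from the lower end of the 60 to 70 percent range.

```python
# Window sizes as listed in Table 1.
CONTEXT_WINDOWS = {
    "GPT-4o": 128_000,
    "Claude Opus 4.6": 200_000,
    "GPT-5.2": 400_000,
    "Gemini 2.5 Pro": 1_000_000,
    "Gemini 3 Pro": 10_000_000,
    "Llama 4 Scout": 10_000_000,
}


def smallest_sufficient_model(required_tokens, safety_ratio=0.6):
    """Return the model with the smallest window whose conservative limit fits the input.

    Ties on window size are broken alphabetically by model name.
    Returns None when nothing fits.
    """
    candidates = [
        (window, name)
        for name, window in CONTEXT_WINDOWS.items()
        if required_tokens <= window * safety_ratio
    ]
    return min(candidates)[1] if candidates else None


print(smallest_sufficient_model(66_667))     # about 50,000 words -> GPT-4o
print(smallest_sufficient_model(150_000))    # GPT-5.2
print(smallest_sufficient_model(7_000_000))  # None
```

A `None` result is a signal to split the workload or switch to a retrieval strategy rather than to run a model past its conservative limit.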

Future Directions: What’s Next for Context Windows in AI

The rapid expansion of context windows shows no signs of slowing as researchers push boundaries through architectural innovations, training improvements, and hardware advancements. Several emerging trends will shape the next phase of development beyond 2026.

Infinite Context Through Advanced Architectures

Researchers are developing new attention mechanisms that break free from quadratic scaling limitations. Techniques like linear attention, sparse attention patterns, and hierarchical context management promise near-infinite context capabilities without proportional cost increases. Early experimental models demonstrate 100-million-token processing with computational requirements comparable to current 1-million-token systems, suggesting practical unlimited context may arrive within the next two to three years.

Multimodal Context Integration

Current context window discussions focus primarily on text tokens, but the next frontier involves integrating images, audio, video, and structured data into unified context windows. Future models will process complex multimedia documents with the same depth currently possible with text, enabling comprehensive analysis of presentations with embedded charts, video content with transcripts, and rich media documentation without format-specific limitations.

Specialized Context Models

The industry is moving toward specialized models optimized for specific types of long-context processing. Legal-specific models trained on contract structures, medical models optimized for clinical documentation, and code-focused models tuned for software repositories will deliver superior performance within their domains compared to generalist long-context models.

Edge Deployment and Local Processing

Advances in quantization and model compression are enabling long-context models to run on consumer hardware. Organizations will gain options to deploy 100,000 to 500,000-token models locally for privacy-sensitive applications, combining the benefits of extended context with data sovereignty and offline operation. This democratization of long-context capability reduces dependence on cloud providers while addressing compliance requirements.

Frequently Asked Questions About Context Windows

What happens when you exceed the context window limit?

When input exceeds the context window, the AI model must either truncate the earliest portions of the conversation or document, losing access to that information, or return an error preventing processing. Most production systems implement automatic truncation, silently dropping older content to fit within limits. This can cause the model to lose critical context from earlier in the conversation or miss important details from the beginning of long documents. Advanced implementations use summarization to compress older context rather than simply discarding it.
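Automatic truncation of this kind is often implemented as a sliding window over the message history. The sketch below is one plausible version, assuming the system prompt is always kept and that a crude word-based estimate stands in for a real tokenizer.

```python
def count_tokens(text):
    """Crude stand-in for a real tokenizer: about 0.75 words per token."""
    return round(len(text.split()) / 0.75)


def truncate_history(system_prompt, messages, limit, reply_budget):
    """Keep the newest messages that fit after reserving room for the system prompt and reply."""
    budget = limit - reply_budget - count_tokens(system_prompt)
    kept = []
    # Walk from newest to oldest, keeping messages while they still fit.
    for message in reversed(messages):
        cost = count_tokens(message)
        if cost > budget:
            break  # this message and everything older is dropped
        kept.append(message)
        budget -= cost
    return list(reversed(kept))


messages = [f"message number {i}" for i in range(1, 6)]
kept = truncate_history("You are helpful.", messages, limit=20, reply_budget=4)
print(kept)  # ['message number 3', 'message number 4', 'message number 5']
```

The two oldest messages disappear without any signal to the user, which is exactly the silent loss described above; replacing the `break` with a summarization step is the usual refinement.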

How do I calculate how many tokens my text contains?

Token count varies by model and language, but a general approximation is that one token equals roughly 0.75 words in English, meaning 1,000 words convert to approximately 1,333 tokens. For precise counts, use the tokenizer tools provided by AI platforms: OpenAI offers an online tokenizer at platform.openai.com/tokenizer, while most API providers include token counting functions in their SDKs. These tools show exactly how your specific text gets tokenized by that particular model.

Does a larger context window always mean better performance?

Not necessarily. While larger context windows enable processing more information, they don’t automatically guarantee better results. Models can suffer from accuracy degradation with very long contexts, particularly for information buried in the middle. Additionally, larger contexts increase processing time and costs significantly. The optimal context window size depends on your specific use case; many tasks perform better with focused, relevant context rather than maximum capacity loaded with extraneous information.

Can I increase the context window of an AI model?

Context windows are determined by model architecture and training, so end users cannot directly increase limits for commercial API services. However, you can employ techniques like retrieval-augmented generation to effectively extend usable context by intelligently loading relevant information on demand. For open-source models, some fine-tuning approaches can extend context windows beyond original training limits, though this requires significant technical expertise and computational resources. Self-hosted deployments offer more flexibility but come with infrastructure complexity.

Which AI model has the largest context window in 2026?

As of April 2026, Google’s Gemini 3 Pro and Meta’s open-source Llama 4 Scout both offer 10 million token context windows, the largest commercially available capacity. These models can process approximately 13,330 pages of text in a single operation, enough for entire book series, comprehensive corporate knowledge bases, or years of documentation. However, practical applications may not require or benefit from such extreme capacity, as effective context usage typically runs at 60 to 70 percent of advertised limits before performance degrades.

How much does using a large context window cost?

Costs scale with token consumption, making large context queries substantially more expensive than shorter interactions. Most providers charge per million tokens processed. For example, processing 500,000 tokens might cost 50 to 100 times more than processing 5,000 tokens, depending on the model and provider pricing structure. Organizations should carefully evaluate whether comprehensive context justifies the cost premium or whether intelligent chunking strategies deliver comparable results at lower expense. Free tiers typically impose strict token limits unsuitable for long-context applications.

What is the lost-in-the-middle problem?

The lost-in-the-middle problem refers to a well-documented phenomenon where AI models reliably recall information from the beginning and end of long contexts but struggle with details buried in the middle portions. Research by Stanford University demonstrated that models perform best when relevant information appears near the start or conclusion of input contexts. This means simply loading maximum context doesn’t guarantee the model will effectively use all available information. Context engineering practices address this by strategically positioning critical information at optimal locations.

Should I use RAG or rely on large context windows?

The choice depends on your specific use case requirements. Large context windows work best for tasks requiring holistic understanding of complete documents, such as analyzing contracts, reviewing code, or synthesizing research papers where all information relates to the query. RAG excels when working with frequently updated information, extremely large document repositories, or scenarios where only small portions of available data apply to each query. Many production systems combine both approaches: RAG retrieves relevant segments from vast libraries, then loads those segments into generous context windows for comprehensive analysis.

Conclusion: Strategic Implications of Context Windows for AI Adoption

Context windows have emerged as a defining characteristic separating capable AI systems from truly transformative ones. The explosive growth from 4,000 tokens in late 2022 to 10 million tokens by early 2026 represents more than technical achievement; it signals a fundamental shift in how AI systems understand, process, and generate complex information.

Organizations evaluating AI platforms must look beyond advertised token counts to understand real-world performance characteristics, cost implications, and reliability across full context ranges. The largest context window doesn’t automatically deliver the best solution for every use case. Success requires matching specific business requirements against model capabilities, implementing intelligent context management strategies, and developing internal expertise in context engineering and optimization.

As context windows continue expanding and architectural innovations promise near-infinite capabilities within the next few years, the competitive advantage will shift from raw capacity to effective utilization. Organizations that develop sophisticated approaches to context management, combine extended windows with retrieval strategies, and build teams skilled in this emerging discipline will extract maximum value from AI investments while controlling costs and maintaining quality standards.

The context window race represents an ongoing evolution rather than a destination. Performance marketers, developers, and business leaders who understand these dynamics position themselves to leverage AI capabilities more effectively than competitors constrained by outdated assumptions about what’s possible with machine intelligence.