What Is RAG in AI? The Simple Definition

Retrieval-Augmented Generation (RAG) is an AI framework that connects a large language model (LLM) to an external knowledge base, allowing it to fetch relevant information before generating a response. The term was first introduced in a 2020 research paper by Meta AI (then Facebook AI Research), co-authored by Patrick Lewis and collaborators from University College London and New York University.

The core problem RAG solves is this: every LLM has a training cutoff date. Everything the model knows was locked in at that moment. Ask it about a news event from last week, a document it has never seen, or proprietary company data, and it will either hallucinate an answer or admit it does not know.

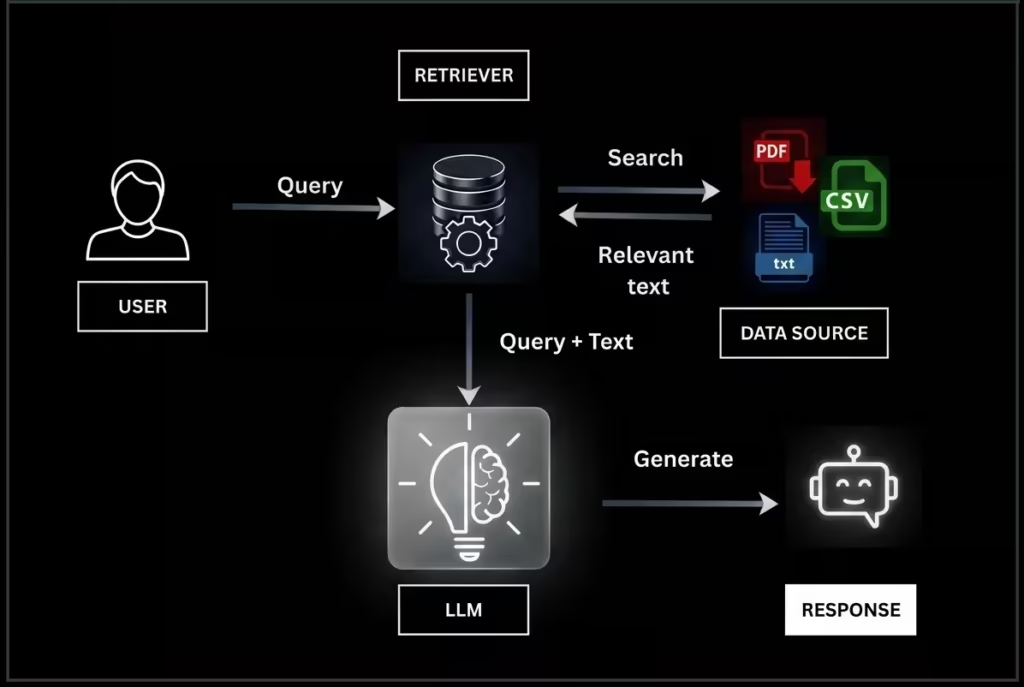

RAG fixes this by giving the model an open book to reference, rather than forcing it to work from memory alone. In short, RAG equals Retrieve plus Augment plus Generate:

- Retrieve: Find the most relevant documents or data chunks from an external source.

- Augment: Inject that retrieved information into the prompt sent to the LLM.

- Generate: The LLM uses both its training knowledge and the retrieved context to produce an accurate, grounded answer.

How RAG Works: Step-by-Step Breakdown

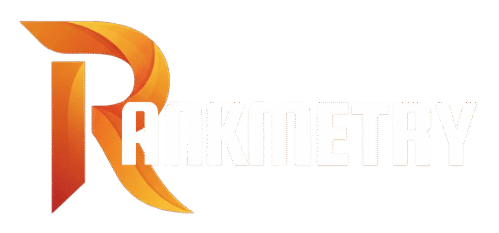

Understanding RAG requires following the data flow from the moment a user types a query to the moment the model responds.

- The user submits a query. For example: ‘What are our Q3 refund rates?’ or ‘What happened in AI news today?’

- The query is converted into a vector embedding, a numerical representation that captures the semantic meaning of the question.

- The retrieval system searches a vector database or web index for documents whose embeddings are mathematically closest to the query embedding.

- The top matching documents or text chunks are returned from the knowledge base.

- The retrieved content is injected into the prompt alongside the original user query. This is the augmentation step.

- The LLM receives the enriched prompt and generates a response grounded in both its training knowledge and the retrieved data.

- The response is returned to the user, often with source citations so claims can be independently verified.

The entire process typically runs in under two seconds in consumer AI products. In enterprise deployments, the knowledge base can be a private document repository, a CRM, internal wikis, or real-time databases updated continuously.

Why RAG Matters: Key Benefits

| Benefit | What It Solves | Business Impact |

| Reduces Hallucinations | LLMs fabricating facts from training data | Higher accuracy and trust in AI outputs |

| Real-Time Knowledge | Training cutoff producing outdated responses | Current answers without retraining the model |

| Cost Efficiency | Full model retraining is expensive and slow | Update the knowledge base, not the model |

| Source Citations | No transparency in standard LLM outputs | Users can verify every claim independently |

| Domain Specificity | Generic models lacking proprietary knowledge | Instant access to internal company data |

| Reduced Legal Risk | Hallucinated content causing compliance issues | Grounded outputs with traceable sources |

RAG vs Fine-Tuning: What Is the Difference?

RAG and fine-tuning are often confused, but they solve different problems. Fine-tuning adjusts the weights of the model itself by training it on new domain-specific data. RAG does not change the model. It expands what the model can see at inference time by connecting it to an external, updatable knowledge base.

RAG is the better choice when data changes frequently. Fine-tuning is better when you need the model to learn a consistent tone, format, or domain-specific reasoning pattern. Most production AI systems in 2025 and 2026 combine both approaches.

| Factor | RAG | Fine-Tuning |

| Cost | Low (update the knowledge base only) | High (requires GPU compute for retraining) |

| Speed to deploy | Fast (hours to days) | Slow (days to weeks) |

| Handles live or changing data | Yes | No |

| Source citations in output | Yes | No |

| Improves reasoning style | No | Yes |

| Requires labeled training data | No | Yes |

RAG in Major LLMs: ChatGPT, Claude, Perplexity, Grok, and Gemini

Each major AI platform implements RAG differently. Some are built on RAG as their core architecture. Others use it as an optional enhancement layer. Here is a platform-by-platform breakdown with concrete examples.

1. ChatGPT (OpenAI): Deep Research and File-Based RAG

ChatGPT uses RAG through two primary mechanisms. The first is file uploads: when you upload a PDF, spreadsheet, or document to ChatGPT, the model applies RAG to retrieve relevant chunks from that file when answering your questions. The second is the Deep Research feature, introduced in early 2025 for Plus subscribers.

Deep Research functions as an agentic RAG pipeline. ChatGPT autonomously queries the web, retrieves and reads multiple sources, synthesizes the information, and produces a cited report. It runs in Quick and Deep modes, with Deep providing more thorough multi-source retrieval suited to financial analysis, technical research, and competitive intelligence.

Real Example: Uploading a 60-page company SOP to ChatGPT and asking ‘What is our escalation policy for billing disputes?’ ChatGPT retrieves the relevant section and answers with a page reference, rather than generating a generic policy from training data.

Limitation (as of early 2026): Free tier users have minimal access to Deep Research. Plus users are capped at 30 deep searches per month.

2. Claude (Anthropic): Long-Context RAG Through Projects and Document Analysis

Claude approaches RAG differently from other platforms. Rather than relying primarily on web retrieval, Claude is optimized for document-level RAG using its large context window. Claude Sonnet 4.6 and Opus 4.6 can process hundreds of pages of documents in a single session, treating the entire uploaded document set as a live knowledge base within the conversation.

The Projects feature on Claude.ai extends this further. Users can store background documents, brand guidelines, SOPs, and reference materials inside a Project, and Claude retrieves relevant context from that persistent knowledge base across multiple conversations.

Real Example: A legal team uploads 15 contracts to Claude. When asked ‘Which agreements include a 30-day termination clause?’ Claude retrieves and cross-references all 15 documents, identifies matching clauses, and cites the specific contract and section number for each.

Real Example: A marketing team stores brand voice guidelines in a Claude Project. Every piece of copy generated in that project is automatically grounded in the brand document without needing to re-upload it each session.

3. Perplexity AI: Search-Native RAG as Core Architecture

Perplexity AI is architecturally the most RAG-native platform on this list. Unlike ChatGPT or Claude, which are primarily generative models with RAG as an add-on layer, Perplexity is built as a retrieval-first system. Real-time web search is the foundation, and AI synthesis is the layer that processes and presents what was retrieved.

Every Perplexity response includes inline footnote citations linked to the live source URLs. This makes Perplexity the strongest platform for fact-checking, academic research, and any query requiring verified, current information with traceable sources.

Real Example: A researcher asks ‘What are the latest FDA approvals for GLP-1 drugs in 2026?’ Perplexity searches the web in real time, retrieves FDA announcement pages, medical journal summaries, and news coverage, and synthesizes a cited answer with links to every source used.

Limitation: Perplexity is weaker at long-form creative writing and coding tasks. Its strength is sourced research, not content generation.

4. Grok (xAI): Real-Time Social Media RAG from X

Grok is xAI’s AI platform, deeply integrated with X (formerly Twitter). Its distinct RAG capability is real-time retrieval from the X/Twitter data stream, meaning it can surface current posts, trending conversations, and breaking news from the platform as grounding context for its answers.

This makes Grok uniquely suited for social media intelligence tasks: monitoring brand mentions, tracking trending topics, understanding public sentiment around a news event, and surfacing real-time audience reactions.

Real Example: A social media manager asks Grok ‘What is the current sentiment around [brand name] on X today?’ Grok retrieves recent posts mentioning the brand and synthesizes a sentiment summary grounded in live social data.

Limitation: Social media posts are not authoritative sources. Grok can retrieve and synthesize unverified claims because its retrieval corpus includes unmoderated social content. For fact-sensitive research, Perplexity or Claude are more reliable choices.

5. Gemini (Google): RAG Grounded in Google Search and Workspace

Gemini implements RAG through two distinct channels. The first is grounding via Google Search: Gemini can be connected to live Google Search, meaning responses draw from the same index that powers the world’s largest search engine.

The second channel is Google Workspace integration. When Gemini is used within Gmail, Google Docs, Google Drive, or Google Sheets, it retrieves relevant content directly from the user’s files. Asking Gemini ‘Summarize the last three emails from our lead investor’ triggers live retrieval from Gmail. Asking ‘What did we agree to in the partnership contract?’ retrieves the relevant Google Doc.

Real Example: A business analyst uses Gemini in Google Sheets with 50,000 rows of sales data. Gemini retrieves the relevant data ranges when asked “Which regions underperformed Q3 targets by more than 15%?” and generates an analysis grounded in the actual spreadsheet, not a fabricated summary.

Note on Google AI Overviews: Google’s AI Overviews in search results use the same RAG principles but draw from the existing Google index, meaning traditional SEO signals including backlinks and domain authority still influence which sources get cited in AI-generated responses.

RAG Implementation Comparison Across Major LLMs (2026)

| Platform | Primary RAG Method | Best RAG Use Case | Key Limitation |

| ChatGPT | Deep Research (web) + file uploads | Multi-source research reports and document Q&A | Deep Research capped at 30/month on Plus tier |

| Claude | Long-context documents + Projects | Contract analysis, internal doc Q&A, brand memory | Limited native real-time web retrieval capability |

| Perplexity | Real-time web search (native RAG) | Fact-checking, academic research, current events | Not optimized for long-form creative generation |

| Grok | Live X/Twitter data stream retrieval | Social sentiment, trend tracking, breaking social news | Sources are unverified social posts, not authoritative |

| Gemini | Google Search + Workspace file retrieval | Google ecosystem workflows, enterprise data analysis | Weaker at standalone coding compared to Claude |

Real-World RAG Use Cases by Industry

RAG is already embedded in tools that professionals use daily. Here is how RAG is applied across industries in 2026.

- Healthcare: Hospitals deploy RAG to connect LLMs to clinical guidelines databases. Clinicians ask natural language questions and receive answers grounded in the hospital’s own protocols, not generic web information.

- Legal: Law firms use RAG to connect LLMs to case law databases and internal document repositories. Attorneys query thousands of contracts and receive answers with specific clause citations in seconds.

- Finance: Investment teams connect LLMs via RAG to earnings call transcripts, SEC filings, and market data feeds. Analysts get real-time, source-cited summaries without manually reading every filing.

- E-commerce: Customer support chatbots use RAG to retrieve real-time order status, return policies, and product specifications from internal databases, eliminating hallucinated shipping timelines and incorrect product details.

- Marketing and SEO: Content teams use RAG-enabled tools to ground AI-generated content in current brand guidelines and live competitor data, reducing the risk of publishing outdated or off-brand copy.

- Human Resources: HR chatbots answer employee questions about leave policies, benefits, and payroll by retrieving the actual policy documents from the company knowledge base.

Frequently Asked Questions About RAG in AI

What does RAG stand for in AI?

RAG stands for Retrieval-Augmented Generation. It is an AI framework that enhances large language models by connecting them to external knowledge sources at inference time, allowing the model to retrieve relevant information before generating a response. The term was introduced in a 2020 research paper by Meta AI researchers Patrick Lewis and colleagues.

Is Perplexity AI a RAG system?

Yes. Perplexity AI is architecturally a RAG-native system. Unlike ChatGPT or Claude, which are generative models with RAG as an optional enhancement layer, Perplexity treats real-time web search as its core foundation and AI synthesis as the layer built on top. Every response includes inline citations linked to the live sources retrieved during that session.

Does Claude use RAG?

Claude uses RAG through its Projects feature and long-context document processing. Users upload large document sets or store persistent reference materials in Claude Projects, and Claude retrieves relevant context from those sources when answering questions. Claude’s RAG approach is optimized for document-level retrieval with a very large context window rather than real-time web search by default.

What is the difference between RAG and fine-tuning?

Fine-tuning modifies the model’s internal weights through additional training on domain-specific data. RAG does not change the model at all. It expands what the model can reference at query time by connecting it to an external, updatable knowledge base. RAG is better for frequently changing data. Fine-tuning is better for teaching the model a consistent style, tone, or specialized reasoning pattern.

Can RAG eliminate AI hallucinations completely?

RAG significantly reduces AI hallucinations by grounding responses in retrieved source documents rather than relying on statistical patterns from training data alone. However, RAG does not eliminate hallucinations entirely. If the retrieval step returns irrelevant or low-quality documents, the model may still generate inaccurate answers. The quality of the knowledge base and the retrieval mechanism directly determines the accuracy of RAG output.

What is a vector database and why does RAG need one?

A vector database stores data as numerical embeddings rather than structured rows and columns. When a query is submitted, it is converted to a vector and compared mathematically against stored vectors to find the most semantically similar content. RAG systems use vector databases because semantic search outperforms keyword search for natural language queries. Common vector databases used in RAG systems include Pinecone, Weaviate, Chroma, and pgvector.

Is RAG only for text data?

No. RAG can work with unstructured data such as text and PDFs, semi-structured data like JSON and tables, and structured data including SQL databases and knowledge graphs. Modern enterprise RAG systems retrieve from multiple data types simultaneously, combining keyword search, vector search, and structured database queries in a single retrieval pipeline.

Conclusion: RAG Is the Bridge Between LLM Intelligence and Real-World Knowledge

Retrieval-Augmented Generation is not a future technology. It is the mechanism already powering the AI tools millions of professionals use daily in 2026. Every time Perplexity cites a live source, every time Claude references an uploaded contract, every time ChatGPT’s Deep Research pulls a current article, every time Gemini reads a Google Drive file, RAG is running underneath.

Understanding how each platform implements RAG helps you choose the right tool for the right task. Use Perplexity when you need verified, sourced facts from live web data. Use Claude when you need to work across large documents or persistent knowledge bases. Use ChatGPT when you need a generalist research agent with broad web access. Use Grok when you need real-time social intelligence. Use Gemini when you operate inside the Google Workspace ecosystem.

The organizations and professionals who master RAG, both as users who know how to prompt it effectively and as builders who know how to deploy it, will hold a compounding competitive advantage as AI continues to penetrate every business workflow.